About me

Hi! I'm Eduardo Perdices. I hold a PhD in Computer Vision from the URJC Robotics Group (Spain). Previously, I earned a five-year Bachelor's degree in Computer Science Engineering and a Master's degree in Telematics and Information Systems from Rey Juan Carlos University.

With over 15 years of professional experience, including an early background as a researcher, I currently work as a Computer Vision Engineer at Arcturus Industries.

Research

Visual SLAM

Simultaneous Localization and Mapping (SLAM) is the problem of building a map of an unknown environment while simultaneously tracking the position of an agent within it. Visual SLAM solves this using only camera input, making it a key technology for autonomous robots, drones, and mobile devices. Modern approaches such as ORB-SLAM3 support monocular, stereo, and RGB-D configurations and can operate across a wide range of environments and motion profiles.

I developed SD-SLAM, a semi-direct monocular visual SLAM system optimized for resource-constrained platforms such as drones and smartphones. SD-SLAM combines direct image alignment with feature-based methods to achieve robust tracking at low computational cost, without sacrificing accuracy. The source code is available on GitHub.

The video below demonstrates SD-SLAM running on the TUM RGB-D benchmark dataset:

Augmented Reality

Augmented Reality (AR) overlays digital content onto the real world, and its quality depends largely on how precisely the device knows its position and orientation in space. Accurate, low-latency 6-DoF tracking is therefore the core challenge in any AR system, and it is where Visual SLAM and AR research naturally intersect.

My work in this area focuses on markerless AR for AR/VR headsets, where tracking is driven by visual features and inertial data from the IMU rather than relying on external markers or dedicated hardware. Fusing visual odometry with IMU measurements improves robustness against fast motion and textureless regions, enabling reliable and immersive experiences on modern headsets.

Deep Learning

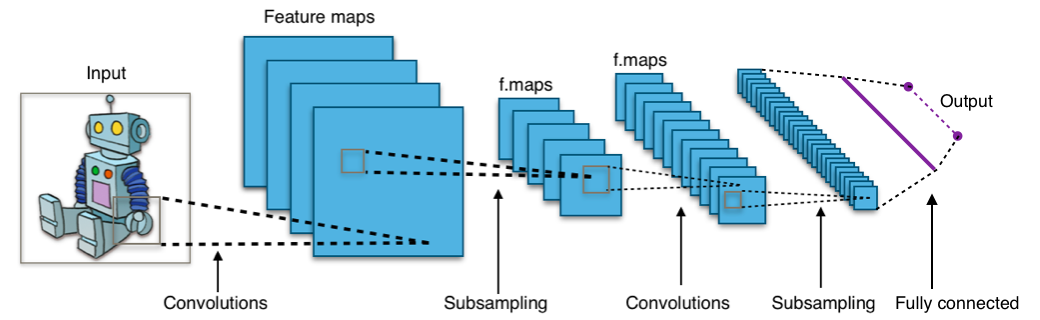

Deep learning has transformed computer vision over the past decade. Convolutional Neural Networks established the foundation for image recognition, and the field has since evolved toward transformer-based architectures, self-supervised pre-training, and large vision-language models capable of open-vocabulary understanding. Techniques such as Neural Radiance Fields (NeRF) and 3D Gaussian Splatting have also opened new routes to scene reconstruction from images alone.

My deep learning research focuses on 3D object recognition and on integrating learned representations into geometric pipelines. A particular interest is using neural networks to make visual SLAM more robust and accurate, for example by using learned depth estimation or semantic segmentation to guide mapping and relocalization in challenging conditions.

Publications

Journal Citation Reports (JCR)

-

Sensors 2019, 19(2), 302. MDPI. DOI: 10.3390/s19020302.

https://www.mdpi.com/1424-8220/19/2/302

-

Revista Iberoamericana de Automática e Informática Industrial (RIAI), Vol. 15, No. 4, 2018, pp. 404–415.

https://polipapers.upv.es/index.php/RIAI/article/view/8962

-

Sensors 2013, 13(1), 1268–1299.

http://www.mdpi.com/1424-8220/13/1/1268

Books and Book Chapters

-

Robotic Vision: Technologies for Machine Learning and Vision Applications. IGI Global, pp. 406–436, 2012. ISBN: 978-146-662-672-0.

http://www.igi-global.com/chapter/attentive-visual-memory-robot-localization/73201

-

Robot Soccer, ed. Vladan Papic. IN-TECH, pp. 67–100, 2010. ISBN: 978-953-307-036-0.

Journals

-

Applied Sciences 2020, 10(21), 7419. MDPI. DOI: 10.3390/app10217419.

https://www.mdpi.com/2076-3417/10/21/7419

-

Journal of Physical Agents, Vol. 6, No. 1, pp. 21–30, 2012. ISSN: 1888-0258.

-

Journal of Physical Agents, Vol. 4, No. 1, pp. 11–18, 2010. ISSN: 1888-0258.

Conference Papers

-

WAF2020 Physical Agents Workshop, November 2020. DOI: 10.1007/978-3-030-62579-5_20. pp. 291–304. Springer.

-

Robot2013 First Iberian Robotics Conference, Madrid, November 2013. pp. 663–678. Springer.

-

Robot2013 First Iberian Robotics Conference, Madrid, November 2013. pp. 541–556. Springer.

-

RoboCity2030 11th Workshop, pp. 71–94. U. Carlos III, March 2013. ISBN: 978-84-695-7212-2.

-

2012 IEEE Intelligent Vehicles Symposium Workshops, Alcalá de Henares, June 2012. ISBN: 978-84-695-3472-4.

-

WAF2011 XII Physical Agents Workshop, pp. 87–94. Albacete, September 2011. ISBN: 978-84-694-6730-5.

-

5th Workshop on Humanoid Soccer Robots, pp. 29–34. Nashville, December 2010. ISBN: 978-88-95872-09-4.

-

RoboCity2030 7th Workshop, pp. 129–148. Móstoles, October 2010. ISBN: 978-84-693-6777-3.

-

RoboCity2030 7th Workshop, pp. 107–127. Móstoles, October 2010. ISBN: 978-84-693-6777-3.

-

WAF2010 XI Physical Agents Workshop. Valencia, September 2010. ISBN: 978-84-92812-54-7.

-

WAF2009 X Physical Agents Workshop, pp. 121–128. Cáceres, September 2009. ISBN: 978-84-692-3220-0.

Theses

-

PhD in Advanced Hardware and Software Systems, Rey Juan Carlos University, July 2017.

-

Master's Degree Project (Telematics and Information Systems), Rey Juan Carlos University, July 2010.

-

Bachelor's Degree Project (Computer Science and Engineering), Rey Juan Carlos University, September 2009.

Talks

-

Master's Degree in Computer Vision, Rey Juan Carlos University, April 2016.

-

Robotics Group, Rey Juan Carlos University, March 2013.

-

IEEE Intelligent Vehicles Symposium Workshops, Perception in Robotics, June 2012.

-

RoboCup team meeting, Rey Juan Carlos University, December 2009.