Table of contents

- 2010.08.27. Corner detection using real images with and without Gabor Filters

- 2010.08.18. Corner detection using Gabor filters

- 2010.08.12. Using Gabor Filters to detect basic shapes

- 2010.07.13. Log-polar images and movement analysis

- 2010.03.26. Designed new GUI

- 2010.03.23. Pioneer following arrows from one side to the opposite

- 2010.03.23. Space recognition with a spiral movement, with a big threshold

- 2010.03.12. Space recognition with a spiral movement

- 2010.03.10. Security window

- 2010.02.26. Consistent 3D memory

- 2010.02.25. Pioneer around lab, recognizing environment

- 2010.02.17. Pioneer around lab following arrows

- 2010.02.04. Algorithm following arrows

- 2010.02.01. Algorithm detect and pay attention to arrows

2010.08.27. Corner detection using real images with and without Gabor Filters

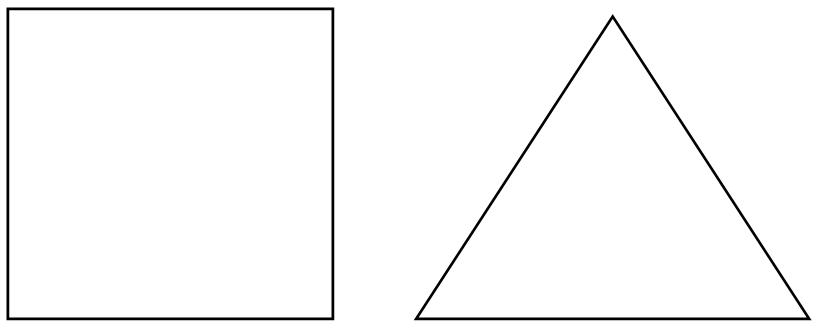

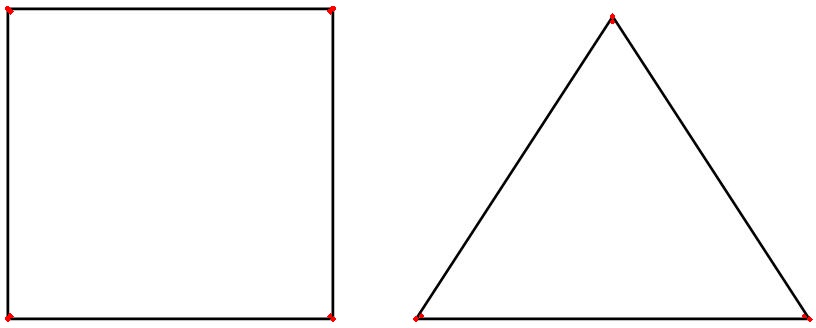

As shown in the previous entry, I'm using Gabor filters to detect borders for a specific combination of orientations and at a single scale. That way, when I run the FAST algorithm to detect corners, I only get the corners that match these specifications. I chose FAST for corner detection simply because it is quite precise. Here is an example:

a) Original image:

b) FAST cornered image (red marks):

My main purpose is to show the difference between:

- Using FAST on the Gabor-filtered image.

- Using FAST on the original image.

In this experiment, we're using a real FireWire camera to capture the images in real time. The results are as follows:

a) Original image:

b) Gabor filtered image (orientations: 0° x 90°):

c) FAST over Gabor filtered image:

d) FAST over original image:

The difference between using Gabor filters and not using them is striking: example c) contains only the desired corners, whereas example d) contains too much noise.
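
For readers unfamiliar with FAST, the idea behind it can be sketched in a few lines: a pixel is marked as a corner when enough contiguous pixels on a 16-pixel circle around it are all brighter or all darker than the centre by some threshold. The following is only a minimal pure-Python sketch of that segment test (9 contiguous pixels, no non-maximum suppression, no speed-up tricks); it is not the implementation used in the experiment, and the names `fast_corners`, `threshold` and `min_run` are illustrative assumptions.

```python
# Offsets (dx, dy) of the 16-pixel circle of radius 3, in circular order.
CIRCLE = [(0, -3), (1, -3), (2, -2), (3, -1), (3, 0), (3, 1), (2, 2), (1, 3),
          (0, 3), (-1, 3), (-2, 2), (-3, 1), (-3, 0), (-3, -1), (-2, -2), (-1, -3)]


def longest_circular_run(flags):
    """Length of the longest run of True values, treating the list as a ring."""
    if all(flags):
        return len(flags)
    best = run = 0
    for flag in flags + flags:  # walk the ring twice so wrapped runs are counted
        run = run + 1 if flag else 0
        best = max(best, run)
    return best


def fast_corners(image, threshold=50, min_run=9):
    """Return (x, y) positions that pass the segment test.

    `image` is a list of rows of grey values. The defaults are assumed
    values for this sketch, not the settings used in the experiment.
    """
    height, width = len(image), len(image[0])
    corners = []
    for y in range(3, height - 3):
        for x in range(3, width - 3):
            center = image[y][x]
            ring = [image[y + dy][x + dx] for dx, dy in CIRCLE]
            brighter = [p > center + threshold for p in ring]
            darker = [p < center - threshold for p in ring]
            longest = max(longest_circular_run(brighter),
                          longest_circular_run(darker))
            if longest >= min_run:
                corners.append((x, y))
    return corners


# Synthetic example: a bright 8x8 square on a dark 24x24 background.
image = [[255 if 8 <= x <= 15 and 8 <= y <= 15 else 0 for x in range(24)]
         for y in range(24)]
corners = fast_corners(image)
```

On this synthetic square, the four corner pixels pass the test, while pixels in the middle of a straight edge or inside the square do not. Running the same function on a filtered image instead of the raw one is what restricts the detections to the borders the filter has kept.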

2010.08.18. Corner detection using Gabor filters

As I described in the last entry, I'm using Gabor filters to detect borders. But I've realized that I cannot apply all forty filters and still run in real time. So I've settled on a single scale (we could say the robot is myopic) and a pair of orientations in order to detect some corners; from those we will then be able to detect basic shapes.

Here we have the original image:

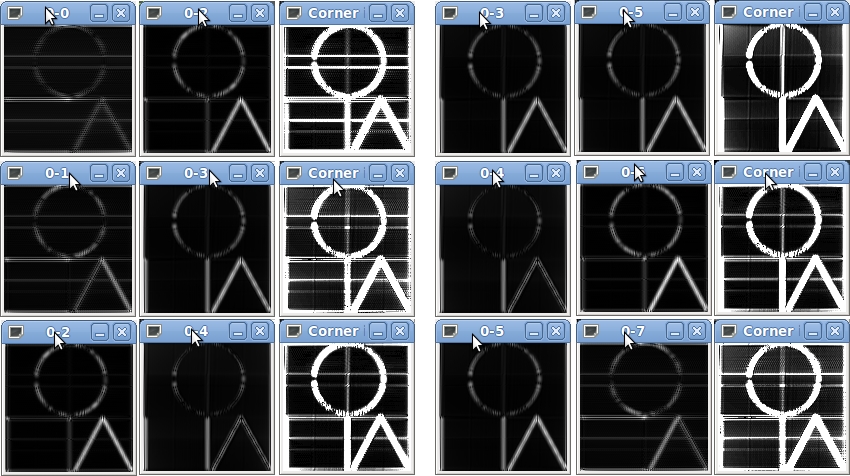

And here are the results when using a single scale and 6 orientation combinations (0-90, 45-135, 90-180, ...).

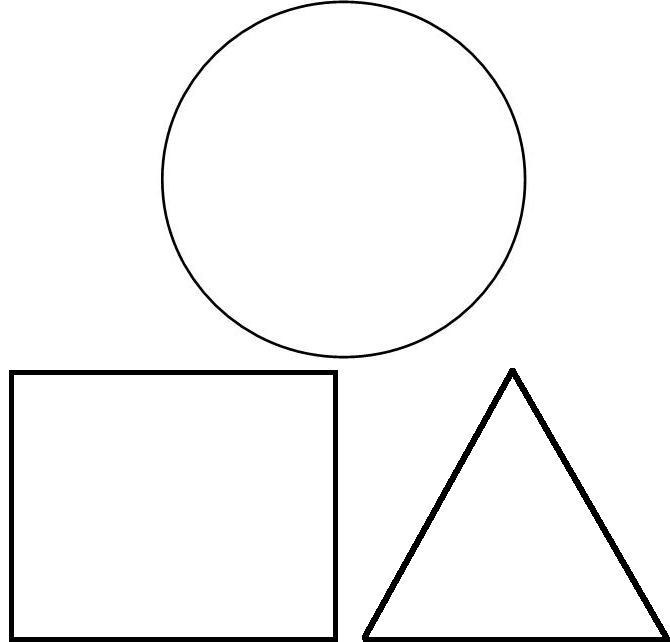
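
The post does not say how the two orientations of a pair are combined. One plausible way, shown here purely as an assumption, is to filter the image with both orientations and multiply the magnitudes of the two responses, so that only points where both border directions meet keep a high value. The sketch below is pure Python; the odd phase (`psi = pi/2`), the kernel size and the other parameter values are illustrative choices, and `orientation_pair_map` is a hypothetical helper name.

```python
import math


def gabor_kernel(size, wavelength, theta, sigma, gamma=0.5, psi=math.pi / 2):
    """Real Gabor kernel (size should be odd); psi = pi/2 gives an odd, edge-like filter."""
    half = size // 2
    kernel = []
    for y in range(-half, half + 1):
        row = []
        for x in range(-half, half + 1):
            xr = x * math.cos(theta) + y * math.sin(theta)
            yr = -x * math.sin(theta) + y * math.cos(theta)
            envelope = math.exp(-(xr * xr + (gamma * yr) ** 2) / (2.0 * sigma ** 2))
            row.append(envelope * math.cos(2.0 * math.pi * xr / wavelength + psi))
        kernel.append(row)
    return kernel


def filter_response(image, kernel):
    """Correlate the image with the kernel, treating pixels outside the image as 0."""
    height, width = len(image), len(image[0])
    half = len(kernel) // 2
    out = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            acc = 0.0
            for ky, krow in enumerate(kernel):
                yy = y + ky - half
                if not 0 <= yy < height:
                    continue
                for kx, kval in enumerate(krow):
                    xx = x + kx - half
                    if 0 <= xx < width:
                        acc += kval * image[yy][xx]
            out[y][x] = acc
    return out


def orientation_pair_map(image, theta_a, theta_b, size=7, wavelength=4.0, sigma=1.5):
    """Assumed combination rule: product of the two response magnitudes."""
    resp_a = filter_response(image, gabor_kernel(size, wavelength, theta_a, sigma))
    resp_b = filter_response(image, gabor_kernel(size, wavelength, theta_b, sigma))
    return [[abs(a) * abs(b) for a, b in zip(row_a, row_b)]
            for row_a, row_b in zip(resp_a, resp_b)]


# Synthetic example: a bright 12x12 square on a dark 28x28 background.
image = [[1.0 if 8 <= x < 20 and 8 <= y < 20 else 0.0 for x in range(28)]
         for y in range(28)]
corner_map = orientation_pair_map(image, 0.0, math.pi / 2)  # the 0-90 pair
```

With the 0-90 pair, the map is large at the corners of the square and practically zero both in the middle of a straight edge (only one orientation responds there) and in flat regions.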

2010.08.12. Using Gabor Filters to detect basic shapes

I've implemented a Gabor filter system that I'm going to use to detect basic shapes (squares, triangles, circles). In image processing, a Gabor filter is a linear filter typically used for edge detection. The frequency and orientation representations of Gabor filters are similar to those of the human visual system, and they have been found to be particularly appropriate for texture representation and discrimination. In the spatial domain, a 2D Gabor filter is a Gaussian kernel function modulated by a sinusoidal plane wave. (Text extracted from Wikipedia.)

Basic Gabor filter form

(You may need to zoom in on the image.)

So I get forty filters like this one, but with different orientations and scales: 5 scales x 8 orientations per scale. That way, I can get different border detection results.
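
As a rough illustration of such a bank, the sketch below builds a real-valued Gabor kernel (a Gaussian envelope multiplied by a cosine wave along the direction `theta`) and then generates 5 scales x 8 orientations. Only the 5 x 8 layout comes from the text above; the wavelength spacing, the ratio between `sigma` and the wavelength, the aspect ratio `gamma` and the function names are assumptions made for this example.

```python
import math


def gabor_kernel(size, wavelength, theta, sigma, gamma=0.5, psi=0.0):
    """Return a real Gabor kernel as a list of rows (size should be odd)."""
    half = size // 2
    kernel = []
    for y in range(-half, half + 1):
        row = []
        for x in range(-half, half + 1):
            # Rotate the coordinates so the wave travels along direction theta.
            xr = x * math.cos(theta) + y * math.sin(theta)
            yr = -x * math.sin(theta) + y * math.cos(theta)
            envelope = math.exp(-(xr * xr + (gamma * yr) ** 2) / (2.0 * sigma ** 2))
            carrier = math.cos(2.0 * math.pi * xr / wavelength + psi)
            row.append(envelope * carrier)
        kernel.append(row)
    return kernel


def gabor_bank(n_scales=5, n_orientations=8, base_wavelength=4.0):
    """Build n_scales x n_orientations kernels keyed by (scale, orientation)."""
    bank = {}
    for s in range(n_scales):
        wavelength = base_wavelength * 2 ** (s / 2)  # assumed half-octave steps
        sigma = 0.56 * wavelength                    # assumed envelope width
        size = 2 * int(math.ceil(3 * sigma)) + 1     # odd size covering +/- 3 sigma
        for o in range(n_orientations):
            theta = o * math.pi / n_orientations     # 0, 22.5, ..., 157.5 degrees
            bank[(s, o)] = gabor_kernel(size, wavelength, theta, sigma)
    return bank


bank = gabor_bank()
print(len(bank))  # number of kernels in the bank
```

Each kernel would then be applied to the image separately, which is where the cost of using all forty filters comes from.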

One-scale results

We get these results using just one scale and different orientations; the differences between orientations are easy to see. (The first picture is the original image.)

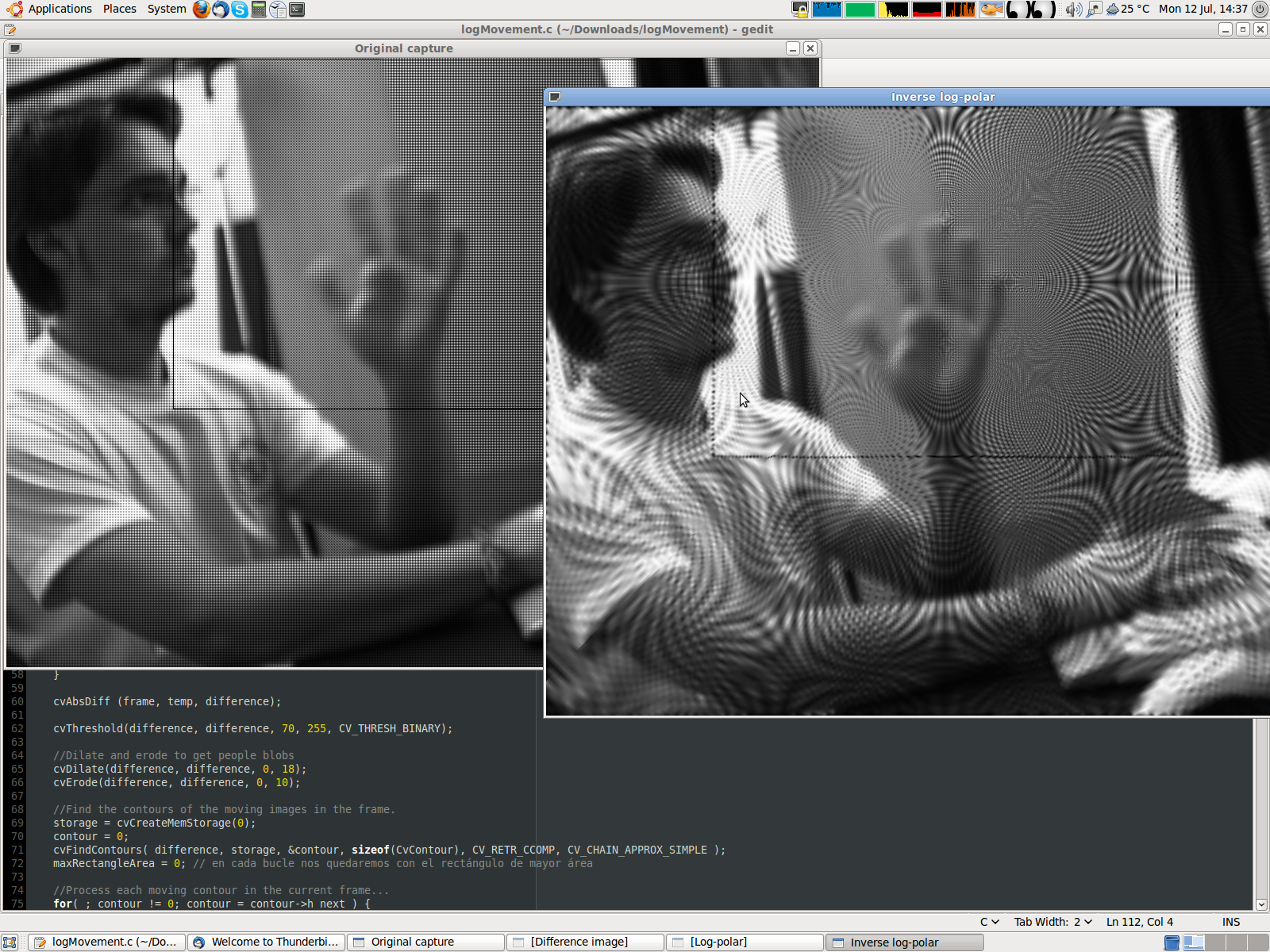

2010.07.13. Log-polar images and movement analysis

To make the attention system algorithm faster, we're going to work with log-polar images. To begin with, we have a covert attention system guided by movement. That way, we simulate the mechanism of the human eye (retina and fovea) and its basic behavior: we pay attention to where we see movement.

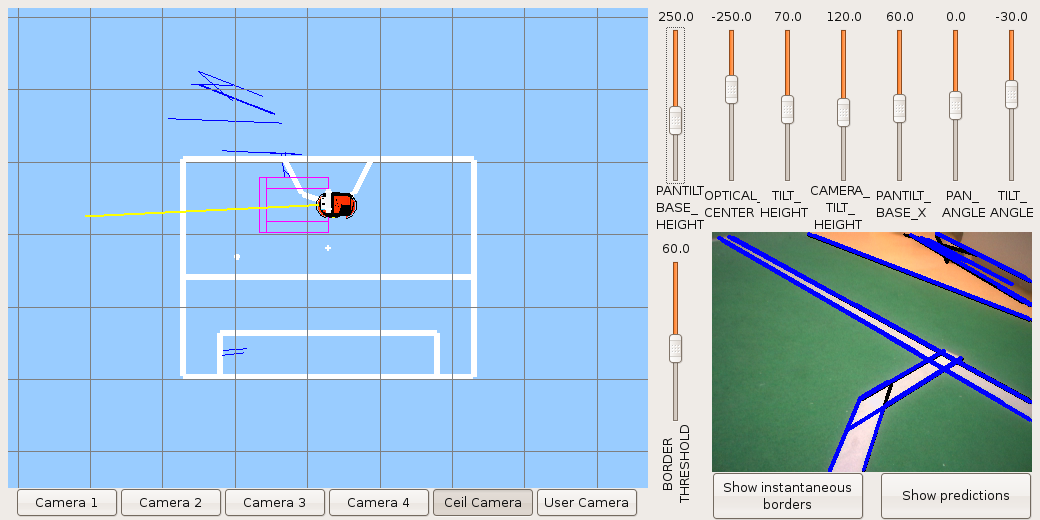

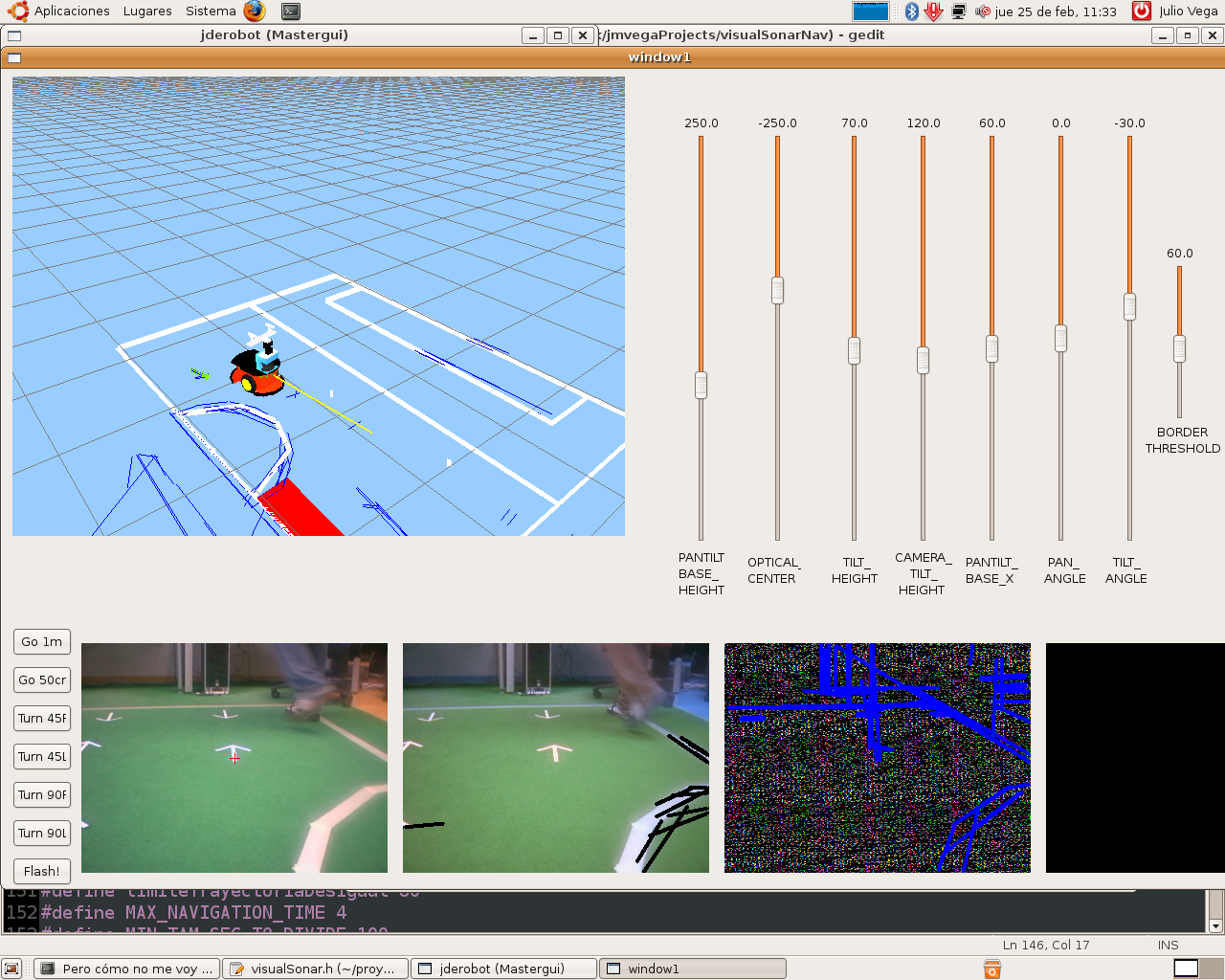
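
A log-polar image samples the original densely near the centre (the fovea) and more and more sparsely towards the periphery, so it holds far fewer pixels than the original. The following is a minimal nearest-neighbour sketch of that mapping in pure Python; the function name and the numbers of rings and wedges are assumptions for illustration, not the values used in this work.

```python
import math


def log_polar(image, n_rings=16, n_wedges=32):
    """Resample `image` (list of rows) onto a ring x wedge log-polar grid."""
    height, width = len(image), len(image[0])
    cx, cy = (width - 1) / 2.0, (height - 1) / 2.0
    max_radius = min(cx, cy)
    out = [[0] * n_wedges for _ in range(n_rings)]
    for ring in range(n_rings):
        # Radii grow exponentially from 1 pixel up to max_radius.
        radius = max_radius ** (ring / (n_rings - 1))
        for wedge in range(n_wedges):
            angle = 2.0 * math.pi * wedge / n_wedges
            x = int(round(cx + radius * math.cos(angle)))
            y = int(round(cy + radius * math.sin(angle)))
            # Clamp to the image in case rounding lands just outside it.
            x = min(width - 1, max(0, x))
            y = min(height - 1, max(0, y))
            out[ring][wedge] = image[y][x]
    return out


# Example: a 33x33 image whose grey value equals its column index.
image = [[x for x in range(33)] for _ in range(33)]
lp = log_polar(image)  # 16 x 32 samples instead of 33 x 33 pixels
```

Any later processing, such as comparing consecutive frames to find movement, then runs on the smaller log-polar grid.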

2010.03.26. Designed new GUI

We now have a new GUI that is more useful and makes it easier to see whatever you want. Here is a screenshot.

2010.03.23. Pioneer following arrows from one side to the opposite

This video shows the Pioneer's behavior with our attention system. It has to follow arrows located above the RoboCup football field, with a movement similar to the famous Ping-Pong game.

2010.03.23. Space recognition with a spiral movement, with a big threshold

Here we run the same experiment as the previous one, but now we want to produce continuous lines. To do so, we had to increase the parallelism threshold so that segments fuse together. The main problem is the noise we get in this case.
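
The effect of that threshold can be illustrated with a small sketch. It assumes, hypothetically, that two segments are candidates for fusion when the angle between their directions is below the threshold; the actual fusion rule used by the robot is not described here, and the function names are made up for the example.

```python
import math


def angle_between(seg_a, seg_b):
    """Smallest angle in degrees between two undirected segments ((x1, y1), (x2, y2))."""
    def direction(seg):
        (x1, y1), (x2, y2) = seg
        return math.atan2(y2 - y1, x2 - x1)

    diff = abs(direction(seg_a) - direction(seg_b)) % math.pi
    return math.degrees(min(diff, math.pi - diff))


def can_merge(seg_a, seg_b, parallelism_threshold_deg):
    """Assumed rule: segments may fuse if they are parallel within the threshold."""
    return angle_between(seg_a, seg_b) <= parallelism_threshold_deg


seg_a = ((0.0, 0.0), (10.0, 0.0))
seg_b = ((10.0, 0.0), (20.0, 1.0))  # tilted by roughly 5.7 degrees

print(can_merge(seg_a, seg_b, 3.0))   # small threshold: kept as separate segments
print(can_merge(seg_a, seg_b, 10.0))  # big threshold: fused into one line
```

A bigger threshold joins more fragments into continuous lines, but it also accepts segments that only look parallel because of estimation errors, which is one way the extra noise can appear.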

2010.03.12. Space recognition with a spiral movement

This video shows the robot's spiral movement across the RoboCup football field. As expected, its estimates contain odometry errors. Even so, the memory stays coherent around the robot, correcting earlier, erroneous estimates.

2010.03.10. Security window

A security window has been added to the robot. We can see the 3D representation.

2010.02.26. Consistent 3D memory

Now we can see how the robot's 3D memory is consistent with the real environment.

2010.02.25. Pioneer around lab, recognizing environment

Here we can see the representation inside the robot's 3D memory after it has been moving for a few minutes. It is an amazing image because the odometry errors aren't significant: the estimated segments and the real segments fit well.

2010.02.17. Pioneer around lab following arrows

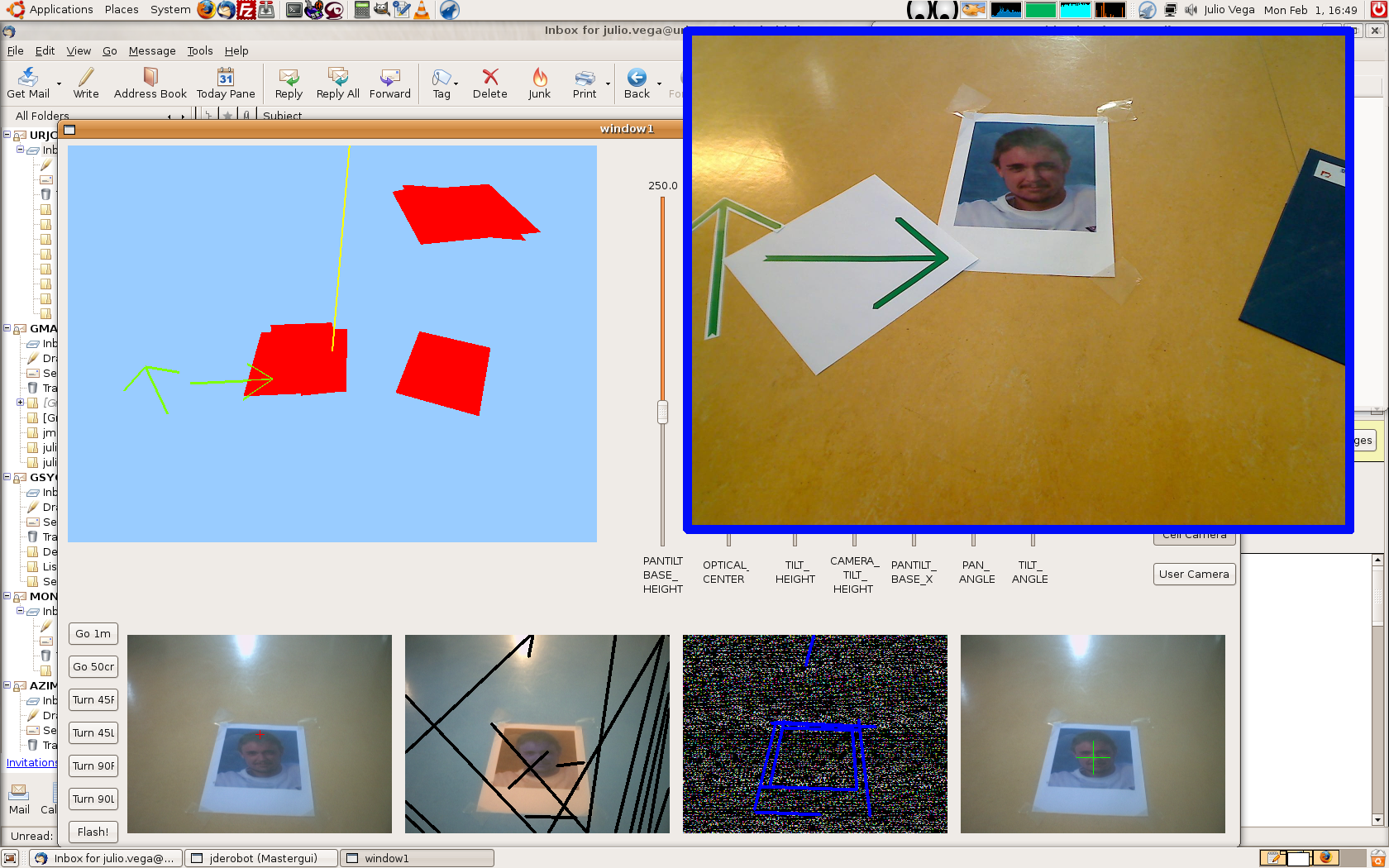

In these videos we can see how our robot goes around the lab avoiding obstacles, following arrows and detecting faces. The attention system is well built, so the robot only pays attention to those things it considers important for its navigation. While the robot is moving, it keeps gathering information about its environment and building up its memory.

In the first video, we see the Pioneer robot from an external camera. In the second one, we see the robot's memory, from the on-board camera.

2010.02.04. Algorithm following arrows

In this video, our algorithm is detecting all the objects around the robot. Now, arrows let the robot know where the goal is. When there are many arrows around the robot, only the nearest arrow has the main influence on the robot's navigation.
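
The "nearest arrow wins" rule can be sketched as follows. The arrow representation (a dictionary with a position and a heading) and the function name are hypothetical; they only illustrate the selection step, not the robot's real data structures.

```python
import math


def nearest_arrow(robot_xy, arrows):
    """Return the arrow closest to the robot, or None if no arrow is visible."""
    if not arrows:
        return None
    return min(arrows,
               key=lambda a: math.hypot(a["x"] - robot_xy[0], a["y"] - robot_xy[1]))


# Hypothetical detections: positions in metres, heading in degrees.
arrows = [
    {"x": 5.0, "y": 0.0, "heading": 90.0},
    {"x": 1.0, "y": 1.0, "heading": 0.0},
]
guide = nearest_arrow((0.0, 0.0), arrows)
print(guide["heading"])  # heading of the arrow that steers the robot
```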

2010.02.01. Algorithm detect and pay attention to arrows

Now the algorithm is able to detect faces, parallelograms and arrows, albeit with too much noise. That way, our robot will be able to navigate by following these landmarks.

![Julio Vega's home page](https://gsyc.urjc.es/jmvega/figs/cabecera.jpg)